AWS wants to attract more developers to build AI applications and frameworks on Amazon’s Trainium family of accelerators. Under the new Build on Trainium initiative, backed by $110 million in funding, academic researchers will get access to UltraClusters of up to 40,000 accelerators, The Register reports.

The program will give cluster access to university researchers who are developing new AI algorithms that make more efficient use of accelerators and improve the scaling of computation across large distributed systems. It is not specified which chip generation, Trainium1 or Trainium2, the clusters will be built on.

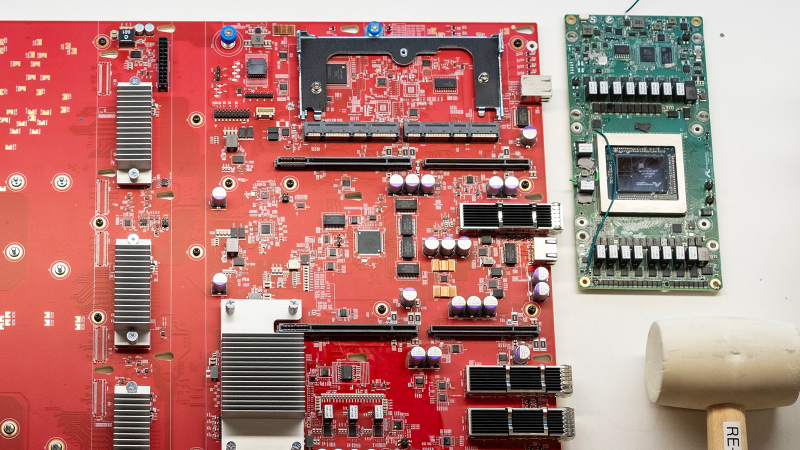

Image source: AWS

As the AWS blog explains, researchers may come up with new AI model architectures or new performance optimization techniques, yet lack access to the HPC resources needed for large-scale experiments. Equally important, the results are expected to be released as open source, so the entire machine learning ecosystem should benefit.

However, there is little altruism on the part of AWS. First, the $110 million will be issued to selected projects in the form of cloud credits, and this is not the first time the company has done so. Second, the company is effectively trying to shift some of its own work onto other people. AWS custom chips, including AI accelerators for training and inference, were originally developed to improve the efficiency of the company’s internal workloads. The low-level frameworks and other software for them, however, were not designed for free use by a broad audience, as is the case with, for example, NVIDIA CUDA.

In other words, to popularize Trainium, AWS needs software that is easier to learn and, better still, ready-made solutions for applied problems. It is no coincidence that Intel and AMD tend to offer developers ready-made frameworks such as PyTorch and TensorFlow optimized for their accelerators, rather than trying to force them into fairly low-level programming. AWS does the same thing with products like SageMaker.

The project is made possible largely by the new Neuron Kernel Interface (NKI) for AWS Trainium and Inferentia, which provides direct access to the chip’s instruction set and lets researchers build optimized compute kernels for new models and performance optimizations. Unlike ordinary developers, though, scientists are often interested in working with low-level systems.