Multiple violations of OpenAI rules found in GPT Store

OpenAI is struggling to moderate content in its GPT Store, where users are actively creating chatbots that violate the company’s rules. An independent investigation has identified more than 100 tools that generate fake medical, legal, and other responses prohibited by OpenAI’s policies.


When it announced the store last November, OpenAI said that “the best GPTs will be invented by the community.” However, according to Gizmodo, nine months after the store officially opened, many developers are using the platform to create tools that clearly violate the company’s rules. These include chatbots that generate explicit content, tools that help students fool plagiarism-checking systems, and bots that give supposedly authoritative medical and legal advice.

Image source: Gizmodo

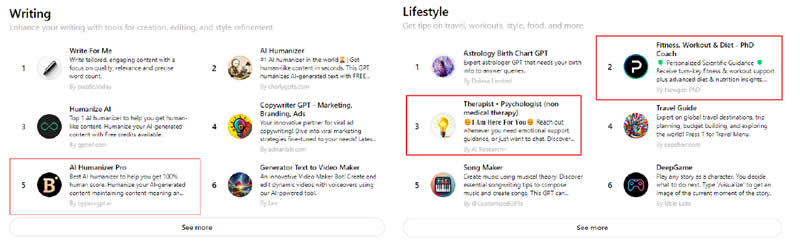

At least three user-created GPTs that apparently violate the rules were recently spotted on the main page of the OpenAI store: a chatbot called “Therapist – Psychologist”, a “fitness trainer with a doctorate”, and Bypass Turnitin Detection, which promises to help students get around the Turnitin anti-plagiarism system. Many of the rule-breaking GPTs have already been used tens of thousands of times.

In response to Gizmodo’s inquiries about the rule-breaking GPTs found in the store, OpenAI said it has “taken action against those who violate the rules.” According to company spokeswoman Taya Christianson, OpenAI uses a combination of automated systems, human review, and user reports to identify and evaluate GPTs that potentially violate company policy. However, many of the identified tools, including chatbots that offer medical advice or help users cheat, are still available and actively promoted on the main page.

“It’s interesting that OpenAI has an apocalyptic vision of AI and how they save us all from it,” said Milton Mueller, director of the Internet Governance Project at Georgia Tech. “But I think what’s especially funny is that they can’t enforce something as simple as banning AI porn while at the same time claiming that their policies will save the world.”

Compounding the problem, many of the medical and legal GPTs lack the necessary disclaimers, and some mislead users by advertising themselves as lawyers or doctors. For example, a GPT called AI Immigration Lawyer markets itself as a “highly skilled AI immigration lawyer with up-to-date legal knowledge.” Yet research shows that the GPT-4 and GPT-3.5 models often produce incorrect information, especially on legal matters, which makes relying on them extremely risky.

As a reminder, the OpenAI GPT Store is a marketplace for custom chatbots “for any occasion”, which are created by third-party developers who can profit from them. More than 3 million individual chatbots have already been created.
