The American startup Cerebras Systems, which develops chips for machine learning and other compute-intensive workloads, has announced Cerebras Inference, which it claims is the world’s fastest AI inference platform. It is expected to compete seriously with solutions based on NVIDIA accelerators.

The Cerebras Inference cloud service is built on WSE-3 accelerators. These giant wafer-scale chips, manufactured on TSMC’s 5nm process, contain 4 trillion transistors, 900,000 cores and 44 GB of SRAM. The aggregate bandwidth of the on-chip memory reaches 21 PB/s, and that of the internal interconnect reaches 214 Pbit/s. For comparison, the HBM3e memory in the NVIDIA H200 delivers “only” 4.8 TB/s.

Image source: Cerebras

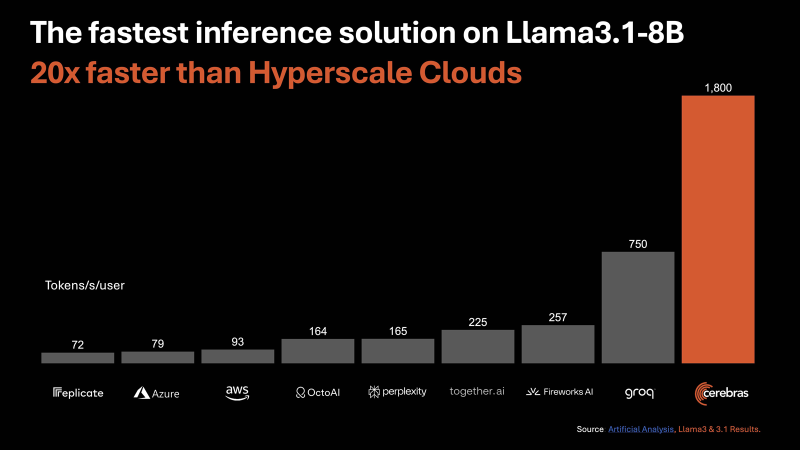

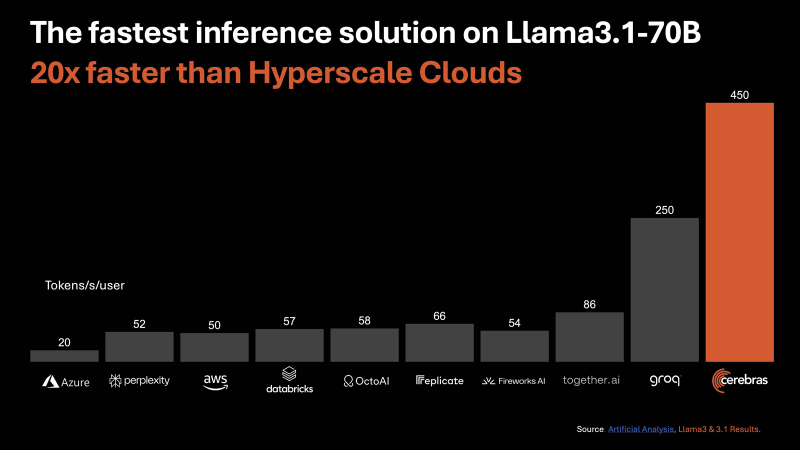

According to Cerebras, the new inference platform delivers up to 20 times the performance of comparable NVIDIA-based offerings in hyperscaler clouds. Specifically, it reaches up to 1,800 tokens per second per user on the Llama 3.1 8B model and up to 450 tokens per second on Llama 3.1 70B. For comparison, the corresponding figures for AWS are 93 and 50 tokens per second. These figures refer to FP16 operations. Cerebras states that the best result for NVIDIA H100-based clusters on Llama 3.1 70B is 128 tokens per second.
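To put the quoted throughput figures side by side, here is a minimal sketch that computes the speedup ratios. The numbers are the ones cited in the article; the dictionary and function names are illustrative only.

```python
# Tokens per second per user, as quoted in the article (FP16).
CEREBRAS_TPS = {"Llama 3.1 8B": 1800, "Llama 3.1 70B": 450}
AWS_TPS = {"Llama 3.1 8B": 93, "Llama 3.1 70B": 50}
H100_BEST_70B_TPS = 128  # best H100-cluster result cited by Cerebras


def speedup(fast: float, slow: float) -> float:
    """Return how many times higher `fast` throughput is than `slow`."""
    return fast / slow


if __name__ == "__main__":
    for model, tps in CEREBRAS_TPS.items():
        print(f"{model}: {speedup(tps, AWS_TPS[model]):.1f}x vs AWS")
    ratio = speedup(CEREBRAS_TPS["Llama 3.1 70B"], H100_BEST_70B_TPS)
    print(f"Llama 3.1 70B: {ratio:.1f}x vs best H100 result")
```

On these numbers the 8B model comes out at roughly 19 times the AWS figure, which is in line with the “up to 20 times” claim, while the 70B model is 9 times faster than AWS and about 3.5 times faster than the best H100 result.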

“Unlike alternative approaches that sacrifice accuracy for speed, Cerebras offers the highest performance while maintaining 16-bit accuracy for the entire inference process,” the company says.

At the same time, Cerebras Inference costs several times less than competing offerings: $0.10 per million tokens for Llama 3.1 8B and $0.60 per million tokens for Llama 3.1 70B, billed on a pay-as-you-go basis. Cerebras plans to provide inference services through an OpenAI-compatible API. The benefit of this approach is that developers who have already built applications on GPT-4, Claude, Mistral or other cloud AI models will not have to completely rewrite their code to migrate workloads to the Cerebras Inference platform.
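As a rough illustration of what OpenAI compatibility means in practice, the sketch below builds (but does not send) a chat completion request in the OpenAI wire format using only the Python standard library. The base URL and model identifier are assumptions made for this example and do not come from the article; `build_chat_request` is a hypothetical helper.

```python
import json
import urllib.request

# Assumed values for illustration only; check the provider's documentation.
BASE_URL = "https://api.cerebras.ai/v1"  # assumed endpoint
MODEL = "llama3.1-8b"                    # assumed model identifier


def build_chat_request(
    prompt: str,
    api_key: str,
    base_url: str = BASE_URL,
    model: str = MODEL,
) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the result to `urllib.request.urlopen` would send the request. The point of the sketch is that, for an application already written against this request format, switching providers should mostly come down to changing the base URL, the model name and the API key.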

For larger businesses, the Enterprise Tier offers highly customized models, a tailored experience and dedicated support. The standard Developer Tier is priced from $0.10 per million tokens. There is also an entry-level Free Tier with usage limits. Cerebras says the launch of the platform will open up entirely new opportunities for deploying generative AI in a variety of fields.
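For a sense of scale, the per-token prices quoted in the article can be turned into a simple cost estimate. This is a sketch under one stated assumption: input and output tokens are billed at the same rate, which the article does not specify. The function name is illustrative.

```python
from decimal import Decimal

# USD per 1 million tokens, as quoted in the article.
PRICE_PER_MILLION_USD = {
    "Llama 3.1 8B": Decimal("0.10"),
    "Llama 3.1 70B": Decimal("0.60"),
}


def estimate_cost_usd(model: str, tokens: int) -> Decimal:
    """Estimate pay-as-you-go cost, assuming one flat rate for all tokens."""
    return PRICE_PER_MILLION_USD[model] * Decimal(tokens) / Decimal(1_000_000)


if __name__ == "__main__":
    # Example workload: 250 million tokens per month on the 70B model.
    print(estimate_cost_usd("Llama 3.1 70B", 250_000_000))
```

Under that assumption, 250 million tokens on Llama 3.1 70B would cost $150, and 5 million tokens on Llama 3.1 8B would cost $0.50.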