Elon Musk’s costly new project, the xAI Colossus supercomputer for artificial intelligence systems, has opened its doors to outsiders for the first time: journalists from ServeTheHome were allowed into the facility. They described the cluster in detail. It is built on Supermicro servers, took 122 days to assemble, and has now been running for almost two months.

Image source: servethehome.com

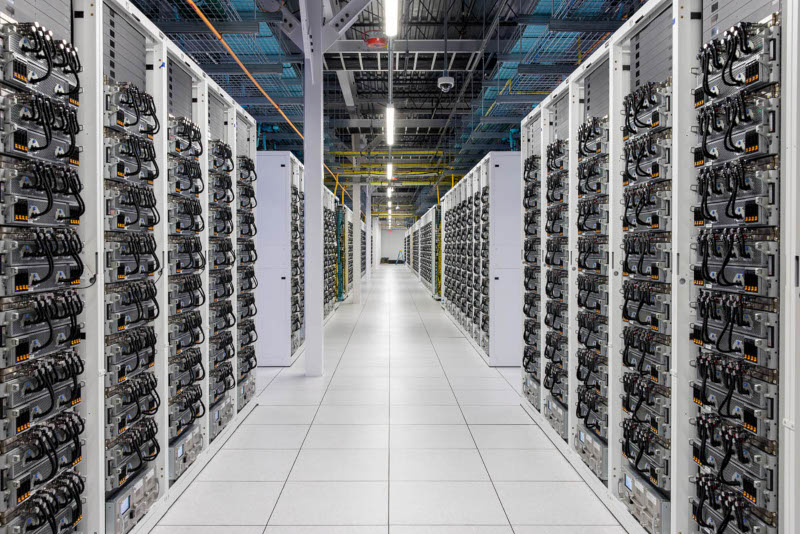

The GPU servers are built on the Nvidia HGX H100 platform. Each one holds eight Nvidia H100 accelerators in a liquid-cooled Supermicro 4U universal system, with hot-swappable components that can be serviced for each GPU individually. Eight servers are installed per rack, giving 64 accelerators per rack. At the bottom of each rack is another Supermicro 4U unit that houses a redundant pumping system and the rack monitoring system.

The racks are arranged in groups of eight, giving 512 GPUs per array. Each server has four redundant power supplies; at the back of the racks are three-phase power supplies and Ethernet switches, along with rack-sized manifolds that handle the liquid cooling. The Colossus cluster contains more than 1,500 racks, or about 200 arrays. The accelerators in these arrays were installed in just three weeks, Nvidia CEO Jensen Huang said earlier.
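The rack and array figures above can be checked with a few lines of arithmetic. The following is only an illustrative sketch: the variable names are made up for this example, and because the article says "more than 1500 racks", the totals are lower bounds rather than exact counts.

```python
# Figures quoted in the article; names are illustrative, not from xAI or Supermicro.
GPUS_PER_SERVER = 8      # eight H100 accelerators per HGX H100 server
SERVERS_PER_RACK = 8     # eight GPU servers per rack
RACKS_PER_ARRAY = 8      # racks are grouped in eights
MIN_RACKS = 1500         # "more than 1500 racks", so treat as a lower bound

gpus_per_rack = GPUS_PER_SERVER * SERVERS_PER_RACK    # 64
gpus_per_array = gpus_per_rack * RACKS_PER_ARRAY      # 512
min_total_gpus = gpus_per_rack * MIN_RACKS            # lower bound on GPU count
min_arrays = MIN_RACKS / RACKS_PER_ARRAY              # lower bound on array count

print(f"GPUs per rack:   {gpus_per_rack}")
print(f"GPUs per array:  {gpus_per_array}")
print(f"GPUs in cluster: at least {min_total_gpus}")
print(f"Arrays:          at least {min_arrays}")
```

With exactly 1,500 racks this gives 96,000 GPUs and 187.5 arrays, which is consistent with the "about 200 arrays" figure once the extra racks are counted.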

Because the AI supercluster trains models continuously and needs very high throughput, xAI engineers had to put considerable effort into networking. Each GPU has a dedicated 400 GbE network controller, and each server has one additional 400 GbE network adapter. As a result, each Nvidia HGX H100 server has 3.6 Tbps of Ethernet bandwidth. The entire cluster runs on Ethernet, not on InfiniBand or the other specialized interconnects that are standard in supercomputers.
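The per-server bandwidth figure follows directly from the adapter counts. A minimal sketch of that sum, with constant names chosen only for this example:

```python
# Figures quoted in the article; names are illustrative.
GPUS_PER_SERVER = 8       # one dedicated NIC per GPU
GPU_NIC_GBPS = 400        # 400 GbE controller per GPU
EXTRA_NIC_GBPS = 400      # one additional 400 GbE adapter per server

# Eight GPU-attached links plus the extra server-level link.
server_gbps = GPUS_PER_SERVER * GPU_NIC_GBPS + EXTRA_NIC_GBPS
server_tbps = server_gbps / 1000

print(f"Ethernet per server: {server_gbps} Gbps ({server_tbps} Tbps)")
```

This is nominal link capacity only; it says nothing about the throughput actually achieved during training.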

Training AI models, including Grok 3, requires not only GPUs but also storage and CPUs, and xAI has disclosed only partial information about these. The redacted video footage shows that x86-based servers in Supermicro chassis handle these roles. They are also liquid-cooled and are designed to serve either as data storage or for CPU-oriented workloads.

Tesla Megapack batteries are also installed at the site. The cluster's power draw can change abruptly during operation, so these batteries, each with a capacity of up to 3.9 MWh, were placed between the power grid and the supercomputer as an energy buffer.