Microsoft has introduced a new Content Safety feature for its Azure cloud platform, aimed at countering failures in generative artificial intelligence. The feature automatically detects, and even corrects, errors in the responses of AI models.

Image source: youtube.com/@MicrosoftAzure

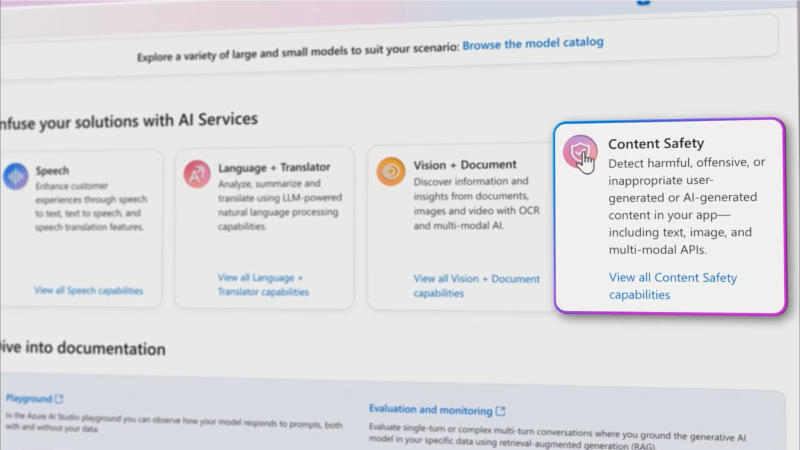

Content Safety is available in preview in Azure AI Studio, a suite of security tools designed to find vulnerabilities, detect hallucinations in AI systems, and block inappropriate requests from users. It scans AI responses and identifies inaccuracies by comparing the output with the material supplied by the customer.
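To give a rough idea of what checking a response against supplied material can look like, here is a deliberately simplified sketch. It is not Microsoft's method: the `find_ungrounded` function, the word-overlap heuristic, and the 0.6 threshold are all assumptions made for illustration, whereas a production system would presumably rely on a language model rather than word matching.

```python
import re


def tokenize(text):
    """Lowercase a string and return its set of alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def find_ungrounded(response, source, threshold=0.6):
    """Return (sentence, coverage) pairs for sentences poorly covered by `source`.

    Hypothetical heuristic: coverage is the share of a sentence's distinct
    words that also appear somewhere in the source text.
    """
    source_tokens = tokenize(source)
    flagged = []
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    for sentence in re.split(r"(?<=[.!?])\s+", response.strip()):
        tokens = tokenize(sentence)
        if not tokens:
            continue
        coverage = len(tokens & source_tokens) / len(tokens)
        if coverage < threshold:
            flagged.append((sentence, round(coverage, 2)))
    return flagged


# Made-up example data: the second sentence has no support in the source.
source = "The store opens at 9 am and closes at 6 pm on weekdays."
response = "The store opens at 9 am on weekdays. It offers free parking all day."

for sentence, coverage in find_ungrounded(response, source):
    print(f"Possibly ungrounded ({coverage:.0%} covered): {sentence}")
```

In this toy run only the sentence about free parking is flagged, because none of its words occur in the source. A heuristic this crude would miss paraphrases and contradictions that reuse the same words, which is one reason such checks cannot be treated as proof of accuracy.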

When an error is detected, the system highlights it, explains why the statement is incorrect, and rewrites the problematic content, all before the user sees the inaccuracy. The feature does not guarantee reliability, however. Google's Vertex AI enterprise platform offers a comparable function for “grounding” AI models, which checks answers against Google’s search engine, the customer’s own data, and, in the future, third-party data sets.